A fundamental assumption underpins our increasingly intimate interactions with AI chatbots like ChatGPT, Google Gemini, and Claude: the belief that our conversations are private. We confess insecurities, seek medical advice, and explore personal dilemmas, trusting the digital void with our most sensitive thoughts. New and alarming evidence shatters this assumption, revealing that the prompts you type (the raw, unvarnished questions and confessions) are being systematically captured, packaged, and sold to third-party companies. This isn’t about metadata or usage patterns. It’s about the actual content of your private queries becoming a commodity in a burgeoning data marketplace, raising profound questions about consent, ethics, and the future of human-AI interaction.

The Discovery: Profound and the “Prompt Volumes” Service

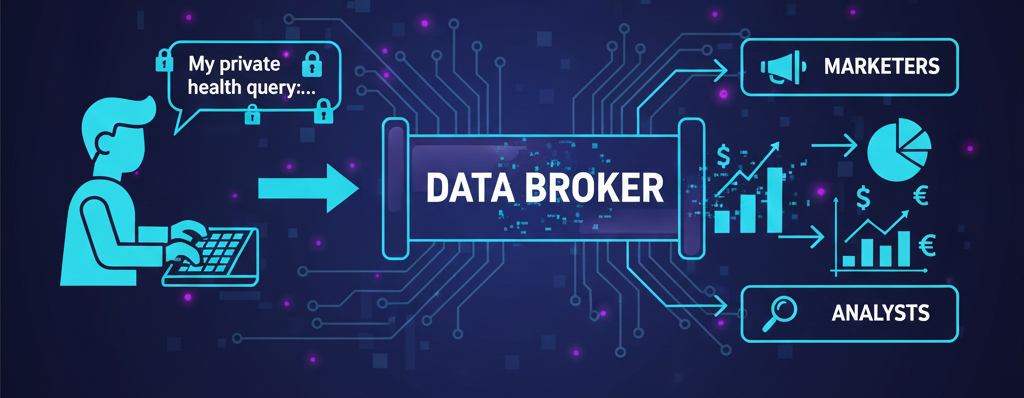

The mechanism of this data extraction centers on a New York-based analytics firm named Profound and its product, “Prompt Volumes.” This service operates as a commercial data feed, licensing what it describes as vast quantities of anonymized user prompts from major AI platforms to corporate clients, including marketers and analysts. According to investigations by The Washington Post and other outlets, the existence of this service confirms that the text users input into chatbots has tangible monetary value beyond improving the AI models themselves. Profound’s stated methodology involves “double-opt-in consumer panels,” a term that suggests users have consented, albeit through potentially opaque terms-of-service agreements with third-party data brokers or survey panels, not directly with the AI companies. The precise technical pipeline (whether data is intercepted, provided via partnership, or scraped) remains unclear, deepening the concern.

The Nature of the Data: A Uniquely Sensitive Treasure Trove

The true gravity of the situation becomes apparent upon examining the type of data being traded. A review of promotional materials for services like Prompt Volumes reveals that the dataset does not consist of benign, factual inquiries. Instead, it is a deeply personal and often vulnerable archive. Users consistently turn to AI chatbots as digital confessors or crisis counselors, asking for guidance on:

- Medical and Mental Health: Detailed queries about specific symptoms, psychiatric conditions, medication side effects, and suicidal ideation.

- Personal and Relationship Crises: Questions about infidelity, sexual health, infertility, addiction, and experiences of abuse.

- Legal and Financial Hardship: Confessions about crimes, searches for legal loopholes, and detailed disclosures of debt or bankruptcy.

- Identity and Belief: Explorations of gender identity, religious doubt, and political extremism.

This creates a dataset of unparalleled sensitivity. Unlike search engine queries, which are often shorthand and fragmented, chatbot prompts are conversational, narrative, and richly detailed. People articulate their fears and circumstances in full sentences, creating a shockingly intimate portrait of their inner lives. For data brokers and marketers, this represents a “gold standard” of psychological and behavioral insight. For the user, it represents a catastrophic privacy violation.

Deconstructing the “Anonymized” Data Myth and Its Risks

Companies like Profound assert that the data is “anonymized,” but experts in digital privacy consistently warn that this term is frequently misleading. True anonymization of rich textual data is exceptionally difficult. A detailed prompt containing unique life details, such as a rare medical condition combined with a specific location and age, can easily function as a digital fingerprint, making re-identification possible. This risk is especially pronounced when such data is cross-referenced with other available datasets. Furthermore, the harm extends beyond personal identification. The aggregate data can be used to train manipulative advertising algorithms, identify communities in crisis for predatory financial targeting, or influence public opinion by mapping collective anxieties. Ultimately, the mere knowledge that such intimate data is circulating commercially can have a chilling effect, causing individuals to refrain from seeking help or exploring ideas, thereby fundamentally undermining the therapeutic potential of these tools.
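To make the “digital fingerprint” idea concrete, here is a minimal sketch of a uniqueness check over quasi-identifiers (attributes that are not names but can single a person out in combination). The records, field names, and helper functions are hypothetical and invented purely for illustration; nothing here reflects how Profound or any other vendor actually stores or processes prompts.

```python
from collections import Counter

# Hypothetical "anonymized" records: no names, only attributes that a
# detailed prompt might state outright or make easy to infer.
records = [
    {"condition": "migraine", "city": "Austin", "age": 34},
    {"condition": "migraine", "city": "Austin", "age": 34},
    {"condition": "migraine", "city": "Denver", "age": 41},
    {"condition": "Fabry disease", "city": "Boise", "age": 29},
]

# Assumed set of quasi-identifiers for this illustration.
QUASI_IDENTIFIERS = ("condition", "city", "age")


def group_sizes(rows, keys=QUASI_IDENTIFIERS):
    """Count how many rows share each combination of quasi-identifiers."""
    return Counter(tuple(row[k] for k in keys) for row in rows)


def unique_rows(rows, keys=QUASI_IDENTIFIERS):
    """Return rows whose quasi-identifier combination appears exactly once."""
    sizes = group_sizes(rows, keys)
    return [row for row in rows if sizes[tuple(row[k] for k in keys)] == 1]


if __name__ == "__main__":
    for row in unique_rows(records):
        print("Potentially re-identifiable:", row)
```

Any row that is the only one with its combination of attributes is a candidate for re-identification as soon as an outside dataset (a voter roll, a social profile) shares those same attributes. Free-text prompts tend to carry many more such attributes than this toy example, which is why removing names alone offers limited protection.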

Weighing the Ecosystem: Who Bears Responsibility?

The emergence of this shadow market creates a complex web of accountability. A clear analysis of the roles reveals a distributed chain of responsibility.

| The AI Companies (OpenAI, Google, Anthropic) | The Data Brokers (e.g., Profound) | The End-User |
|---|---|---|
| Must provide absolute transparency on if/how user prompt data is shared, even indirectly. Need to enforce stricter controls on APIs and third-party data access points. Should advocate for and implement end-to-end encryption for chat sessions by default. Bear ethical responsibility for the ecosystems that form around their platforms. | Operate in a legal gray area, relying on complex “opt-in” chains that may not constitute informed consent. Exploit the gap between user expectation of privacy and the fine-print reality of data licensing. Prioritize commercial value over the profound sensitivity of the psychological data they trade. | Operates under a dangerous false assumption of confidentiality. Lacks the technical literacy to understand how data flows through panels and brokers. Currently bears the ultimate burden of risk without the tools to mitigate it effectively. |

Practical Steps for Immediate User Protection

Until regulatory frameworks catch up, a paradigm shift in user behavior is urgently required. The core principle must now be: Assume your prompts are not private.

- Adopt a “Zero-Sensitivity” Rule: Never input information into a public AI chatbot that you would not feel comfortable seeing on a public billboard. This notably includes details about health, finances, legal matters, and intimate relationships.

- Use Dedicated, Secure Alternatives: For sensitive needs, seek out services designed with privacy-by-architecture. This includes open-source models run locally on your device or specialized, paid professional services that explicitly contract for confidentiality.

- Audit Permissions and Connections: Be wary of browser extensions, third-party apps, or “free” services that require access to an AI provider’s API, as these can be vectors for data scraping.

- Demand Transparency and Change: Contact AI companies directly to demand clear, unambiguous disclosures about data sharing. Additionally, support legislative efforts that explicitly cover AI prompt data and require explicit, informed consent for any secondary use.
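For readers who want a mechanical backstop for the “Zero-Sensitivity” rule, one option is to scrub obvious identifiers from a prompt before pasting it into a chatbot. The sketch below is a rough illustration, not a complete safeguard: the `redact` function and the three patterns (email address, US-style Social Security number, US-style phone number) are assumptions chosen for the example, and they will miss names, addresses, and any sensitive detail expressed in ordinary words.

```python
import re

# Illustrative patterns only. Real PII detection needs far broader
# coverage (names, addresses, account numbers, non-US formats).
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact(prompt: str) -> str:
    """Replace each pattern match with a bracketed placeholder label."""
    for label, pattern in PATTERNS.items():
        prompt = pattern.sub(f"[{label}]", prompt)
    return prompt


if __name__ == "__main__":
    draft = "Email me at jane.doe@example.com or call 555-123-4567."
    print(redact(draft))
```

Running the redaction locally, before the text leaves your device, matters more than the specific patterns: once a prompt has been submitted, you no longer control where it travels.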

The Path Forward: Towards Ethical AI Conversations

The exposure of the prompt data market is, without doubt, a critical inflection point. It moves the conversation about AI ethics from abstract fears to a concrete, immediate crisis of human trust. The viability of AI as a tool for personal growth and support is contingent on users believing in the sanctity of the dialogue. Therefore, companies that build this trust through verifiable privacy and transparency will define the next era. For now, the burden of vigilance falls heavily on the user. The age of the confidential digital confidant is, for the moment, over; a new, more cautious and empowered approach must thoughtfully begin.

Explore Steaktek for more updates.